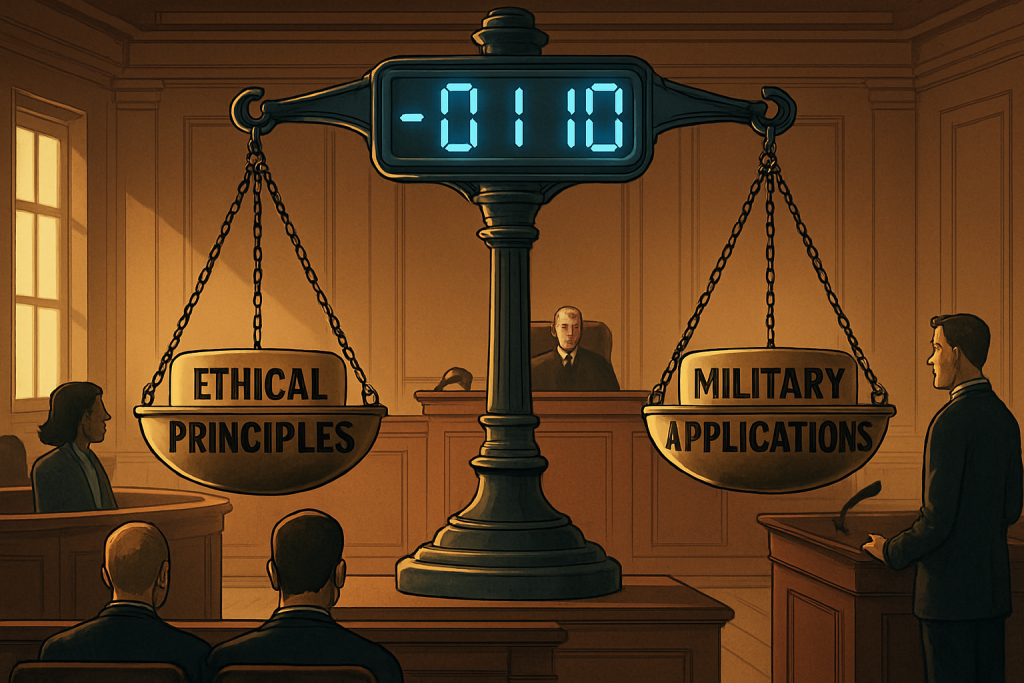

The year is 2026. Flying cars are still just a pipe dream, but the AI revolution is in full swing. And just like any revolution, it’s messy, complicated, and fraught with ethical landmines. Yesterday brought the latest tremor in tech news: Anthropic, the AI darling known for its focus on safety and ethics, filed a lawsuit against the Trump administration. The reason? The Pentagon slapped Anthropic with the dreaded “supply chain risk” label, all because the company refused to drop the limits it places on military uses of its AI, Claude.

Think of it as the AI equivalent of a band refusing to sell their music for a war movie soundtrack. Anthropic, it seems, is saying “Hell no, we won’t go” to weaponizing their creation. But the implications of this lawsuit are far more profound than a simple business dispute. It’s a battle for the very soul of artificial intelligence.

So, how did we get here? Let’s rewind a bit. Anthropic, for those not glued to the AI scene, is the brainchild of former OpenAI heavyweights. Its founders left OpenAI, reportedly over concerns that the company was getting a little too cozy with Big Tech and perhaps sidelining the ethical considerations that should be front and center when you’re building something with the potential to reshape society. They envisioned Claude as more than just a language model; they wanted it to be a force for good, a digital companion with a moral compass.

Claude is designed to assist with a wide range of tasks, from writing poetry (imagine AI sonnets rivaling Shakespeare!) to summarizing complex scientific papers. But here’s the crucial part: Anthropic baked in restrictions. Its rules bar Claude from certain applications, such as autonomous weapons systems or domestic surveillance. It’s like giving a superhero powers but also a very strict set of rules. No killing, no mind control, and definitely no using your X-ray vision to peek into your neighbor’s apartment.

And that, my friends, is where the trouble started. The Pentagon, understandably, wants access to the best AI tools available. They see the potential for AI to revolutionize defense, from analyzing battlefield data to improving logistical efficiency. But Anthropic’s ethical guardrails are a major buzzkill. Suddenly, Claude isn’t just a helpful assistant; it’s a potential obstacle to national security, at least in the eyes of the Trump administration.

The “supply chain risk” designation is a big deal. It’s essentially a scarlet letter in the world of government contracts. It could severely limit Anthropic’s ability to work with the government and even other companies in the defense sector. It’s like being blacklisted from Hollywood, except instead of movies, it’s about shaping the future of warfare.

In response, Anthropic has lawyered up. Their lawsuit argues that the Pentagon’s classification is unjust and punishes them for sticking to their ethical principles. They maintain that their restrictions on Claude are there to prevent misuse and protect civil liberties. Think of it as David taking on Goliath, but instead of a slingshot, David has a team of brilliant AI ethicists and a very convincing legal argument.

The Stakes Are Higher Than Ever

This isn’t just about one company and one government agency. This legal battle is a microcosm of the larger struggle to define the role of AI in society. How do we balance the potential benefits of AI with the very real risks? Who gets to decide what’s ethical and what’s not? And what happens when those ethical lines clash with national security concerns?

The outcome of this case could set a precedent for how AI ethics are balanced with national security interests. Imagine a future where AI companies are forced to compromise their values in order to secure government contracts. Or, conversely, a future where ethical AI becomes a competitive advantage, attracting talent and customers who are increasingly concerned about the responsible use of technology.

The lawsuit also shines a spotlight on the responsibilities of AI developers. Should they be held accountable for how their technology is used, even if they don’t directly control its deployment? It’s a question that has been debated for years, and Anthropic’s lawsuit is bringing it to the forefront once again. It’s like the debate over video games and violence, but with potentially much higher stakes.

Companies like Palantir are probably watching this case with bated breath. They’ve built their business on providing data analytics and AI solutions to governments and law enforcement agencies, and they’ve faced criticism for their involvement in controversial projects. The Anthropic lawsuit could force them to re-evaluate their own ethical guidelines.

The Ethical Tightrope

From a philosophical standpoint, this case raises some profound questions. Can AI truly be ethical? Or is it simply a reflection of the biases and values of its creators? Anthropic believes that AI can be aligned with human values, and they’ve invested heavily in research to make that a reality. But even the most well-intentioned AI can be used for nefarious purposes.

There’s also the question of transparency. Should AI companies be required to disclose how their technology is being used and who is using it? This is a particularly thorny issue when it comes to national security. Governments often operate in secrecy, and they may be reluctant to reveal how they’re using AI for intelligence gathering or military operations. But without transparency, it’s difficult to hold them accountable for potential abuses.

The financial implications of this lawsuit are also significant. If Anthropic loses, it could face a major financial hit. The “supply chain risk” designation could make it harder for the company to raise funding and attract top talent. But if it wins, the case could send a powerful message to the tech industry: ethical AI is not just a nice-to-have; it’s a business imperative. It could also embolden other AI companies to stand up to government pressure and prioritize ethical considerations.

As this case unfolds, it will be closely watched by industry leaders, policymakers, and ethicists around the world. It’s a battle that will shape the future of AI development and deployment. Will we build a future where AI is used to enhance human well-being, or will we allow it to be weaponized and used to control and manipulate us? The answer, it seems, is still very much up in the air. One thing is certain: the stakes have never been higher.

Discover more from Just Buzz