The year is 2026. Flying cars are still stubbornly absent (thanks, Elon!), but artificial intelligence is woven into the fabric of our lives, from personalized medicine to predicting the next viral TikTok dance. But with great power comes great responsibility. And, inevitably, great controversy. Yesterday, that controversy landed squarely on OpenAI’s doorstep with the resignation of a senior robotics executive.

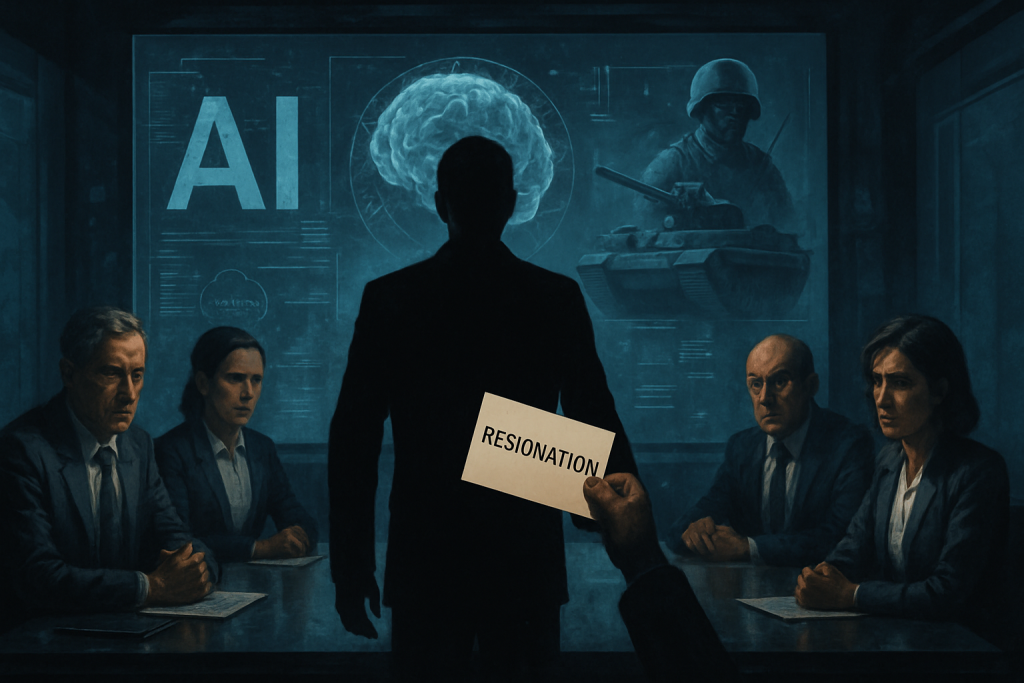

Imagine the scene: a tense boardroom, hushed whispers, and the weight of a decision that could reshape the future of AI. The anonymous executive, a veteran of the AI wars, walked away from it all, driven by a profound ethical conflict. The reason? OpenAI’s recent defense contract with the U.S. Department of Defense. It’s a plot ripped straight from a Philip K. Dick novel, but this time, it’s reality.

But how did we get here? Let’s rewind a bit.

In February 2026, the ink dried on a deal that sent ripples through Silicon Valley. OpenAI, the darling of the AI world, the company that promised to unlock artificial general intelligence for the betterment of humanity, partnered with the Pentagon. This wasn’t just about streamlining paperwork; it was about deploying OpenAI’s cutting-edge AI for national defense. Think smarter drones, enhanced cybersecurity, and maybe even AI-powered strategic analysis. The possibilities, both exciting and terrifying, are endless.

Interestingly, OpenAI wasn’t the only AI firm approached. Anthropic, another major player in the AI arena, reportedly turned down a similar offer, citing their own ethical concerns. It’s a move that positioned them as the conscientious objector of the AI world, a David facing the Goliath of government contracts. OpenAI CEO Sam Altman, ever the pragmatist, took to X (formerly Twitter, but let’s be honest, everyone still calls it Twitter) to defend the partnership, emphasizing OpenAI’s commitment to responsible AI development and deployment. He painted a picture of ethical safeguards and rigorous oversight. But was it enough?

Fast forward to March 8th, and the other shoe dropped. The senior robotics executive, a key player in OpenAI’s ambitious robotics program, tendered their resignation. The unnamed executive’s statement, while carefully worded, was a clear indictment of OpenAI’s new direction. Concerns about the potential for AI-powered warfare and pervasive domestic surveillance were at the heart of the decision. This wasn’t just a difference of opinion; it was a fundamental clash of values. The executive felt that OpenAI had strayed too far from its original mission: to ensure that AGI benefits all of humanity, not just a select few with access to military budgets.

The Fallout: A Tech Community Divided

The resignation has ignited a firestorm of debate within the tech community. It’s a conversation that’s been brewing for years, but this event has brought it to a boil. On one side, you have the pragmatists, who argue that AI is a tool and, like any tool, can be used for good or evil. They believe that collaboration with the military is essential for national security and that responsible development can mitigate the risks. They might point to advancements in disaster relief or search and rescue operations made possible by AI. Think of the AI in “Iron Man” helping save lives.

On the other side, you have the purists, who fear that AI-powered weapons systems and surveillance technologies are a slippery slope to a dystopian future. They worry about autonomous weapons making life-or-death decisions without human intervention, and about the erosion of privacy in an age of ubiquitous surveillance. They might reference “Terminator” or “Minority Report” as cautionary tales.

The public reaction has been equally divided. Social media is ablaze with hashtags like #AIforPeace and #TechEthics, as people grapple with the implications of OpenAI’s decision. Some are hailing the resigning executive as a hero, a whistleblower who dared to stand up to corporate greed. Others are accusing them of naivete, arguing that national security is paramount and that OpenAI is simply doing its part to protect the country.

OpenAI’s Defense: Ethics and Oversight

OpenAI, for its part, is standing firm. The company has reiterated its commitment to ethical AI development, emphasizing that its partnership with the Pentagon includes strict guidelines to ensure responsible use. They claim that their technologies will be deployed in accordance with international laws and human rights standards. OpenAI also highlights its ongoing efforts to engage with stakeholders, address ethical concerns, and maintain transparency in its operations. They are trying to strike a delicate balance: reassuring the public that they are not building Skynet while also fulfilling their obligations to the U.S. government. It’s a tightrope walk, to say the least.

The Bigger Picture: Regulation and the Future of AI

This controversy is more than just a corporate drama; it’s a reflection of the broader challenges facing the AI industry. As AI becomes more powerful and pervasive, questions of regulation and oversight are becoming increasingly urgent. Should there be stricter rules governing the use of AI in military applications? Should AI companies be held liable for the actions of their technologies? Should there be international agreements to prevent an AI arms race? These are the questions that policymakers around the world are grappling with.

The financial implications are also significant. OpenAI still isn’t publicly traded as of 2026, but if it were, its stock would likely swing as investors weighed the potential benefits of government contracts against the reputational risks of ethical controversies. Other AI companies will be watching closely, trying to gauge the public mood and adjust their own strategies accordingly. The AI industry is at a crossroads, and the choices it makes in the coming years will have a profound impact on the future of humanity. Will we choose a path of responsible innovation, guided by ethical principles and a commitment to the common good? Or will we succumb to the temptations of power and profit, unleashing a Pandora’s box of unintended consequences?

The resignation of OpenAI’s senior robotics executive serves as a stark reminder of the complex ethical challenges facing the AI industry. It underscores the need for ongoing dialogue, clear ethical frameworks, and robust regulatory oversight. The future of AI is not predetermined; it is up to us to shape it. Let’s hope we choose wisely.

Discover more from Just Buzz